Research: Review is the new bottleneck

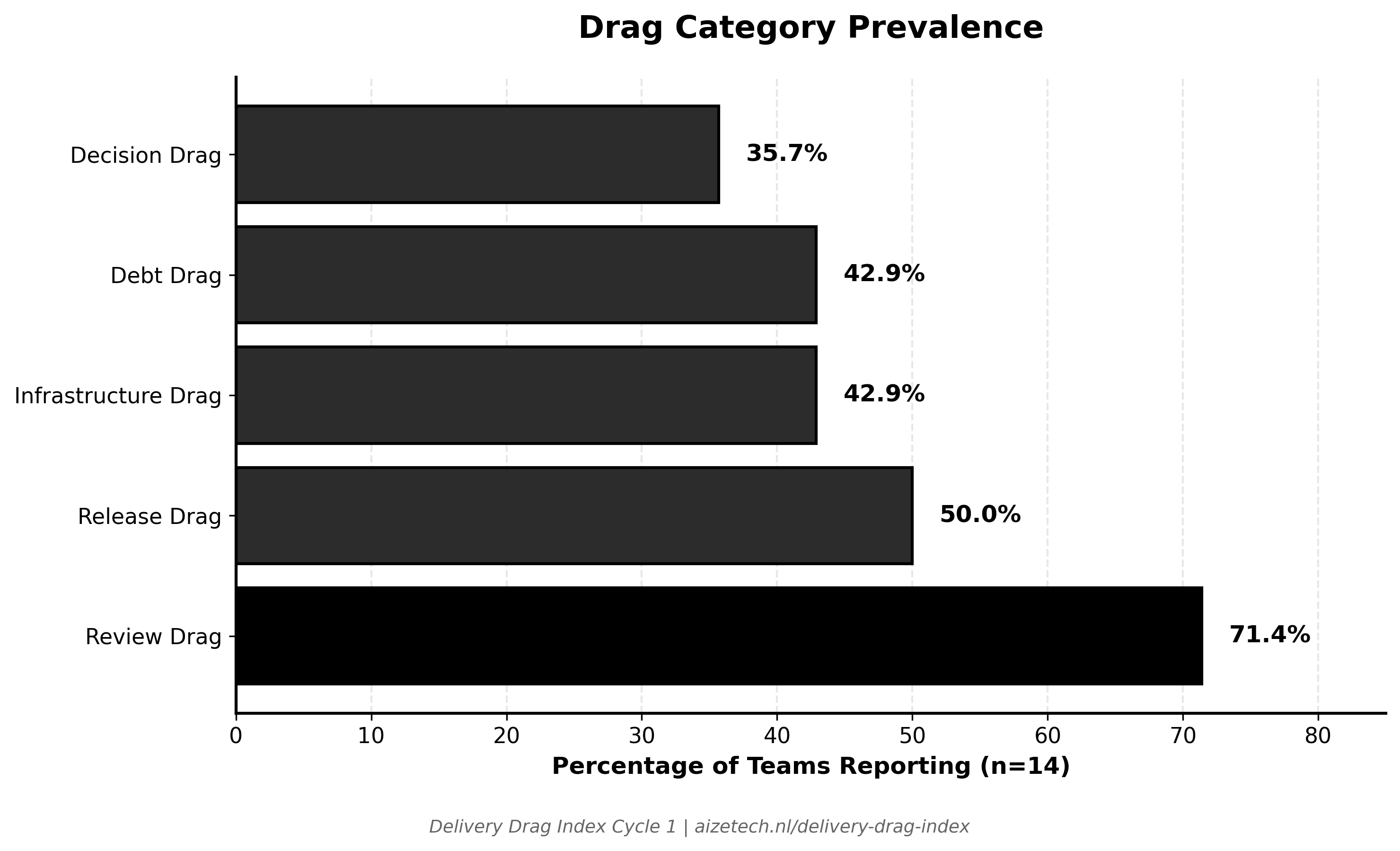

I built the Delivery Drag Index (DDI) to measure where velocity leaks in engineering teams. After 14 valid responses, one pattern is already clear: review is the new bottleneck.

71% of teams report their average PR cycle time is longer than 24 hours. That’s not a tooling problem. That’s a capacity problem.

The data (n=14, preliminary)

This is early. The sample is small. But the pattern is consistent enough to pay attention to.

- 71% report Review Drag (PR cycle time >24 hours from first commit to merge)

- 86% lose 3+ hours per week per engineer to waiting (CI, builds, reviews, approvals)

- Average DDI score: 47.9/100
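The survey numbers are self-reported. If you want to check the Review Drag definition against your own PR history, a minimal sketch looks like this. It assumes you can export a first-commit timestamp and a merge timestamp per PR; the function names are mine and are not part of the DDI tooling.

```python
from datetime import datetime, timedelta

# Threshold taken from the Review Drag definition above: more than 24 hours
# from first commit to merge.
REVIEW_DRAG_THRESHOLD = timedelta(hours=24)


def cycle_time(first_commit: datetime, merged: datetime) -> timedelta:
    """Elapsed time from the first commit on a PR to its merge."""
    return merged - first_commit


def has_review_drag(prs: list[tuple[datetime, datetime]]) -> bool:
    """True when the average cycle time across PRs exceeds 24 hours.

    Each item is a (first_commit, merged) pair. An empty list counts as
    no drag, which is an arbitrary choice for this sketch.
    """
    if not prs:
        return False
    total = sum((cycle_time(start, end) for start, end in prs), timedelta())
    return total / len(prs) > REVIEW_DRAG_THRESHOLD
```

An average hides outliers, so a median or a 90th percentile may tell you more once you have enough PRs to look at.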

The most common bottleneck isn’t writing code. It’s getting code reviewed, merged, and shipped.

What shifted

AI made creation fast. But it didn’t make validation fast.

Teams can generate code faster than they can verify it, integrate it, and deploy it. The constraint moved downstream—from “can we build it?” to “can we safely ship it?”

This matches what I’m hearing from engineering infrastructure people. Repository count is growing without headcount growing. Greenfield projects move faster with AI. Brownfield projects struggle because AI doesn’t understand the legacy constraints and antipatterns already baked in.

Code generation capacity went up. Review capacity stayed the same. The queue got longer.
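The arithmetic behind that queue is simple enough to sketch. The numbers below are made up for illustration and do not come from the survey: a team that opens 20 PRs a week and reviews 20 a week stays level, while the same team opening 30 a week with unchanged review capacity adds 10 to the backlog every week.

```python
def backlog_over_time(
    opened_per_week: float,
    reviewed_per_week: float,
    weeks: int,
    start: float = 0.0,
) -> list[float]:
    """Track the number of PRs waiting for review at the end of each week."""
    backlog = start
    history = []
    for _ in range(weeks):
        # The backlog grows by the gap between arrivals and completed
        # reviews, and it can never drop below zero.
        backlog = max(0.0, backlog + opened_per_week - reviewed_per_week)
        history.append(backlog)
    return history


# Hypothetical figures: creation goes up 50%, review capacity stays flat.
before = backlog_over_time(opened_per_week=20, reviewed_per_week=20, weeks=4)
after = backlog_over_time(opened_per_week=30, reviewed_per_week=20, weeks=4)
print(before)  # [0.0, 0.0, 0.0, 0.0]
print(after)   # [10.0, 20.0, 30.0, 40.0]
```

Real queues are noisier than this, but the direction is the same whenever arrivals outpace reviews.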

This isn’t hypothetical. It’s showing up in the data—even with a small sample.

The pattern is consistent

Every team with a DDI score of 70 or higher reports review drag. All of them.

Every team losing 10+ hours per week to waiting has review drag. All of them.

With 14 responses this is not statistically robust, but the overlap is too consistent to dismiss as noise. When review becomes the bottleneck, everything else slows down. Lost time increases. Iteration cycles stretch. Other drags start compounding.

The manufacturing parallel

Just-In-Time manufacturing exposed downstream bottlenecks that batch production hid. If you speed up the assembly line but the packaging station stays the same speed, packaging becomes the constraint.

AI-accelerated development is doing the same thing to engineering teams. When you increase throughput at the creation layer, you expose the bottlenecks in the validation layer.

Review drag was always there. AI just made it visible by removing the upstream constraint.

What this means for delivery operations

Three patterns I’m seeing:

Ship-Show-Ask triage. Not everything needs the same review rigor. CSS changes and type annotations can be shipped and audited later. Database migrations and authentication logic need review before merge. Allocate review by risk.
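One way to make that triage mechanical is to route PRs by the files they touch. This is a minimal sketch, not a recommended policy: the path patterns are hypothetical, the docs-only "ship" tier is my own addition, and anything the rules do not recognize falls back to "ask" as the conservative default.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns; every team would define its own.
ASK_PATTERNS = ["*migrations/*", "*auth/*"]  # review before merge
SHIP_PATTERNS = ["*.md"]                     # merge without review
SHOW_PATTERNS = ["*.css", "*.pyi"]           # merge now, audit later


def _matches(path: str, patterns: list[str]) -> bool:
    return any(fnmatchcase(path, pattern) for pattern in patterns)


def triage(changed_files: list[str]) -> str:
    """Return 'ship', 'show', or 'ask' for a PR based on its changed files."""
    # Any high-risk path forces a review, whatever else the PR contains.
    if not changed_files or any(_matches(f, ASK_PATTERNS) for f in changed_files):
        return "ask"
    if all(_matches(f, SHIP_PATTERNS) for f in changed_files):
        return "ship"
    if all(_matches(f, SHIP_PATTERNS + SHOW_PATTERNS) for f in changed_files):
        return "show"
    # Unknown territory: default to the strictest tier.
    return "ask"
```

Path matching is a blunt proxy for risk. A one-line change in a core module can matter more than a large CSS diff, so treat the output as a routing hint and keep a human override.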

Review capacity as a first-class metric. If 71% of teams are constrained by review throughput, then reviewer availability matters as much as compute capacity. Teams don’t track it. They should.
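Tracking it does not need much. As a rough sketch, assuming you can export one record per completed review with the reviewer's name and the hours the PR waited for that review (both field names are hypothetical), three numbers already tell you a lot: reviews per reviewer, median wait, and how much of the load sits on one person.

```python
from collections import Counter
from statistics import median


def review_capacity(reviews: list[dict]) -> dict:
    """Summarize review load from records with 'reviewer' and 'wait_hours' keys."""
    if not reviews:
        return {
            "reviews_per_reviewer": {},
            "median_wait_hours": None,
            "busiest_share": None,
        }
    load = Counter(r["reviewer"] for r in reviews)
    return {
        # How many reviews each person completed in the period.
        "reviews_per_reviewer": dict(load),
        # Typical time a PR waited before it was reviewed.
        "median_wait_hours": median(r["wait_hours"] for r in reviews),
        # Fraction of all reviews done by the single busiest reviewer.
        "busiest_share": max(load.values()) / len(reviews),
    }
```

A high busiest share usually means one person's calendar sets the pace for the whole team.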

Containment strategies. AI-generated code needs boundaries. Limit blast radius with feature flags and incremental rollout. The validation layer can’t keep up with unlimited AI throughput, so you need circuit breakers.
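For the feature-flag part, a percentage rollout can be sketched in a few lines. This is a generic hashing approach and not any particular flag service's API; the flag name and user IDs are placeholders.

```python
import hashlib


def in_rollout(flag: str, user_id: str, percent: int) -> bool:
    """Decide whether a user falls inside a flag's rollout percentage.

    Hashing flag and user together gives each user a stable bucket from
    0 to 99 per flag, so raising the percentage only ever adds users.
    """
    digest = hashlib.sha256(f"{flag}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


# Hypothetical usage: expose a new code path to 10% of users first.
if in_rollout("new-checkout", "user-42", percent=10):
    pass  # serve the new path
```

Setting the percentage back to 0 is the circuit breaker: the new path goes dark without a revert or a redeploy.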

See where you stand

If this matches what you’re seeing on your team, the DDI survey gives you a score in 60 seconds. Nine questions. Anonymous.

You get:

- Your DDI score (0-100 scale)

- Which drag categories are hitting you

- How you compare to your team size cohort

It’s a lightweight diagnostic. Not a full audit, but enough to know if review drag is your primary constraint or if something else is leaking velocity.

If your score confirms the bottleneck and you want to map the full picture—where it’s coming from, what to fix first, what the interventions look like—that’s what a Delivery Pressure Review does. Fixed scope, one week, you get a roadmap ranked by impact.

Full methodology and raw data: github.com/aizetech/delivery-drag-index

Reach me at [email protected] if you want to talk through your results or discuss a deeper diagnostic.